Ratings: data/code_quality_preferences_n1000.jsonl

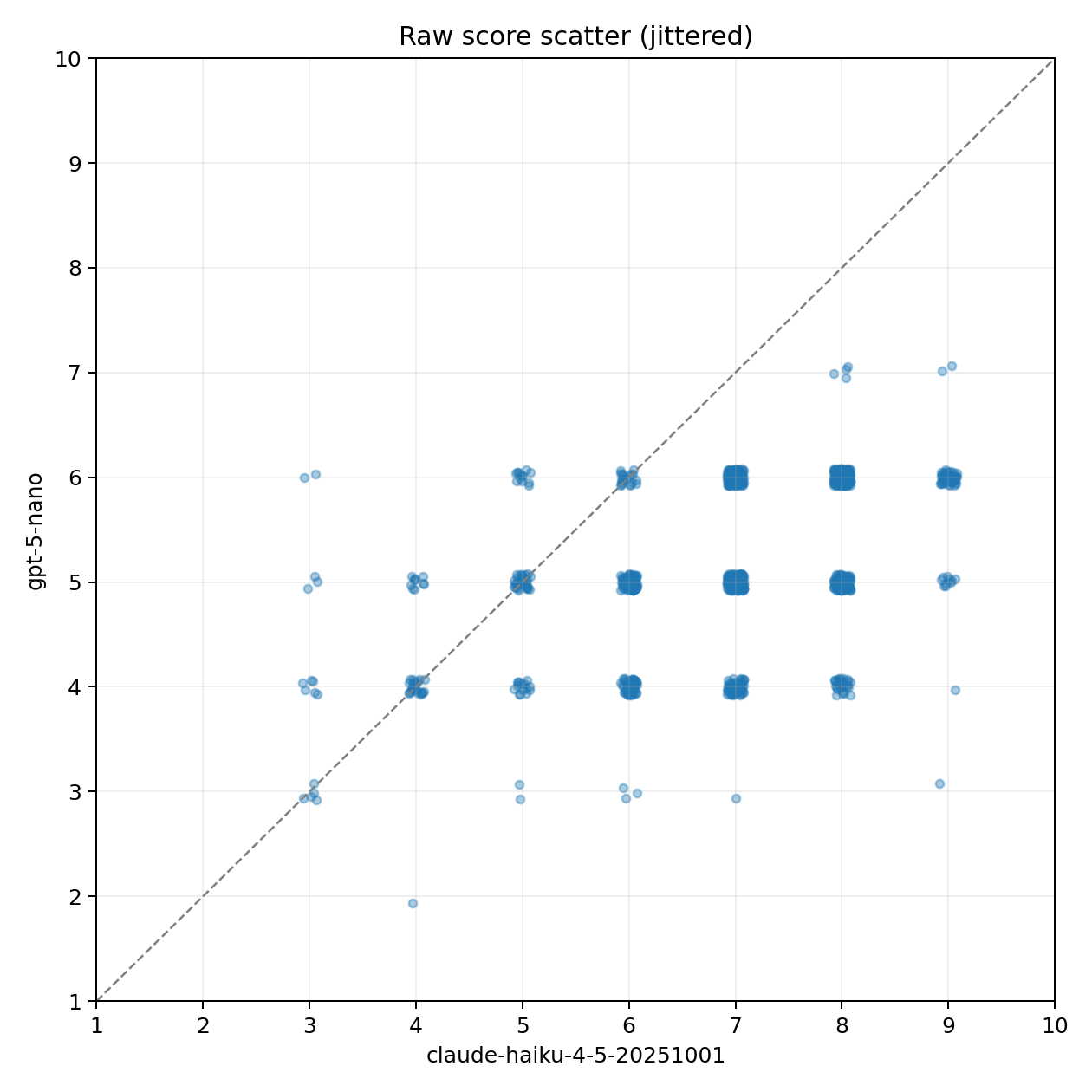

Models: claude-haiku-4-5-20251001 vs gpt-5-nano; n: 1000

| metric | value |
|---|---|
| raw Pearson | 0.468 |
| raw Spearman | 0.458 |
| raw MAE | 1.910 |
| mean claude-haiku-4-5-20251001 − gpt-5-nano | 1.828 |
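The raw metrics in the table above can be reproduced along the following lines. This is a minimal standard-library sketch, not the script that generated the report: the function names and the two short score lists are hypothetical, and it assumes each judge's scores have already been loaded into parallel lists (the JSONL schema is not shown here).

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length score lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def mae(x, y):
    """Mean absolute difference between paired scores."""
    return sum(abs(a - b) for a, b in zip(x, y)) / len(x)


def mean_diff(x, y):
    """Mean signed difference (x minus y), i.e. the overall offset."""
    return sum(a - b for a, b in zip(x, y)) / len(x)


# Hypothetical scores for five items, for illustration only.
judge_a = [7, 8, 6, 9, 5]
judge_b = [5, 6, 5, 7, 4]

print(pearson(judge_a, judge_b), spearman(judge_a, judge_b))
print(mae(judge_a, judge_b), mean_diff(judge_a, judge_b))
```

Integer scores on a short scale produce many ties, so the Spearman helper uses average ranks rather than arbitrary tie-breaking.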

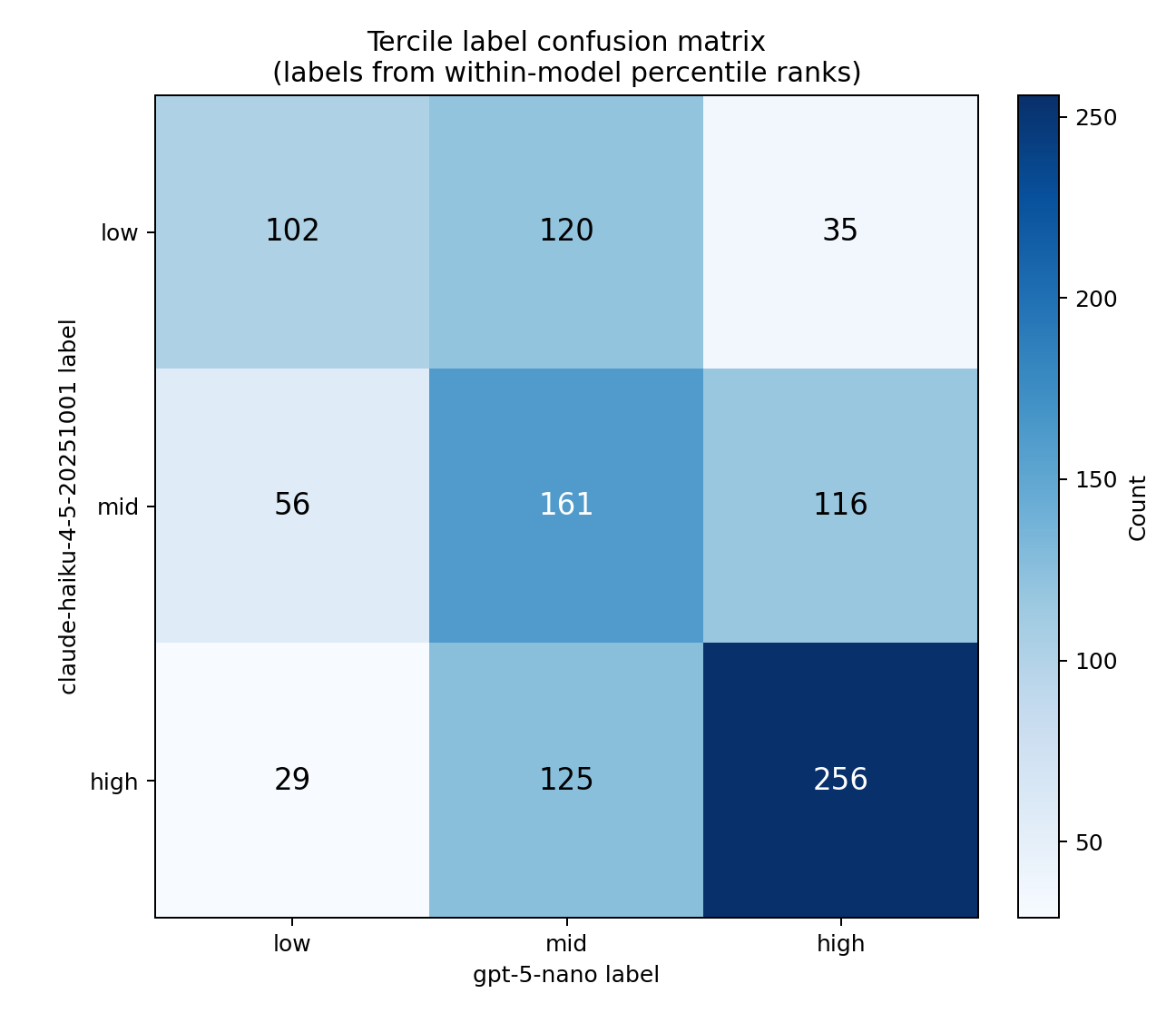

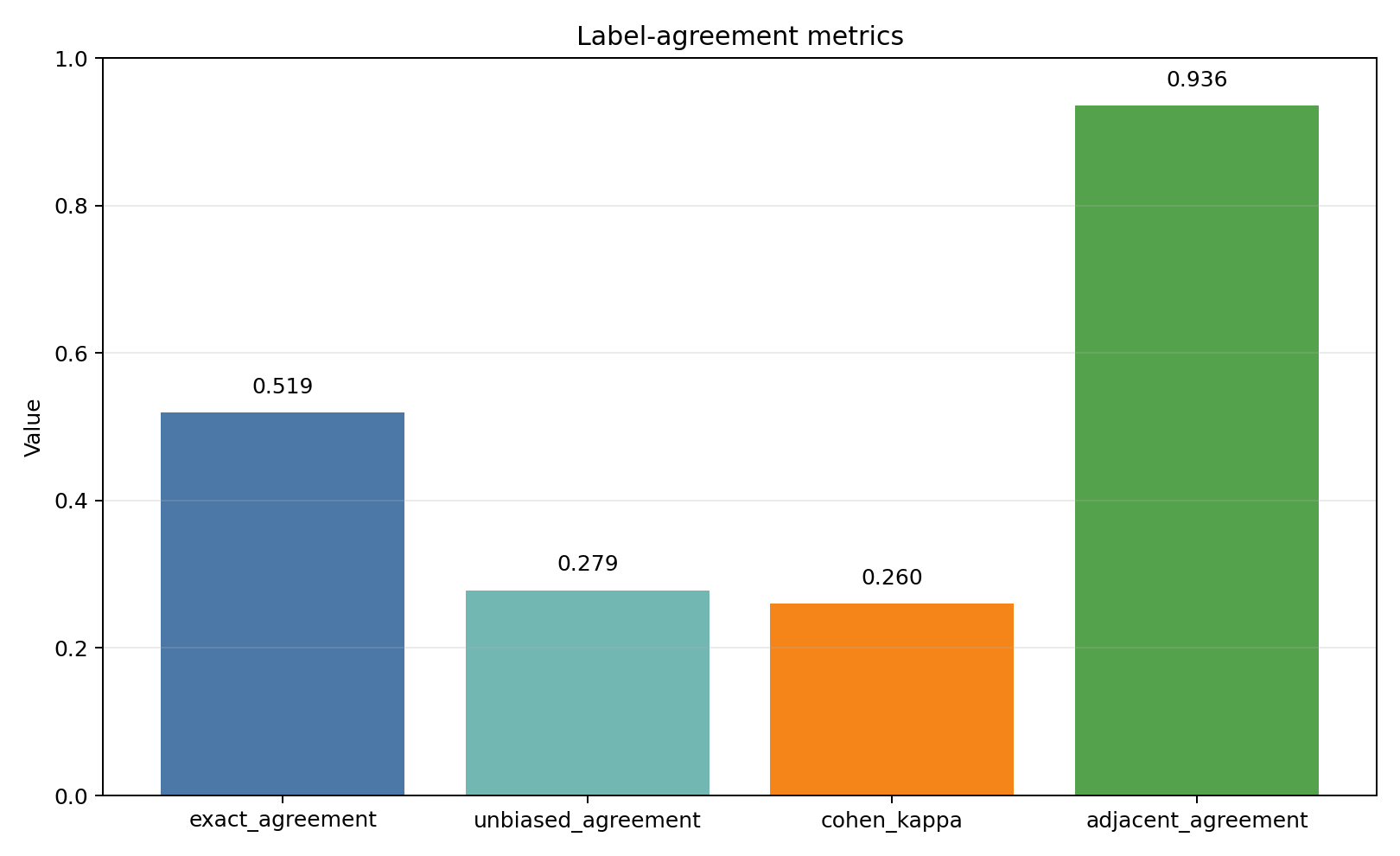

| metric | value |
|---|---|
| tercile exact agreement | 51.9% |
| tercile unbiased agreement | 0.279 |
| Cohen's kappa | 0.260 |
| adjacent agreement | 93.6% |
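The agreement metrics above can be sketched as follows. This is an illustration under stated assumptions, not the report's own code: the function names and sample labels are hypothetical, and "adjacent agreement" is assumed to mean that the two labels differ by at most one tercile. The report does not define "unbiased agreement"; the reported 0.279 is numerically consistent with correcting the 51.9% exact agreement for a uniform 1/3 chance rate, so `uniform_chance_corrected` shows that reading as a guess.

```python
from collections import Counter

# Ordinal position of each tercile label.
ORDER = {"low": 0, "mid": 1, "high": 2}


def exact_agreement(a, b):
    """Fraction of items where both judges assign the same label."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def adjacent_agreement(a, b):
    """Fraction of items whose labels differ by at most one tercile (assumed definition)."""
    return sum(abs(ORDER[x] - ORDER[y]) <= 1 for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement
    estimated from each judge's own label frequencies."""
    n = len(a)
    p_o = exact_agreement(a, b)
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in ORDER) / (n * n)
    return (p_o - p_e) / (1 - p_e)  # undefined when p_e == 1


def uniform_chance_corrected(p_o, k=3):
    """Agreement corrected for a uniform 1/k chance rate (assumed reading of 'unbiased agreement')."""
    return (p_o - 1 / k) / (1 - 1 / k)


# Hypothetical labels for six items, for illustration only.
labels_a = ["low", "mid", "high", "low", "mid", "high"]
labels_b = ["low", "mid", "high", "mid", "high", "low"]

print(exact_agreement(labels_a, labels_b))
print(adjacent_agreement(labels_a, labels_b))
print(cohens_kappa(labels_a, labels_b))
```

Cohen's kappa and the uniform-chance version differ only in the chance term: kappa uses each judge's observed label frequencies, which depart from exact thirds when tied scores straddle a tercile boundary.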

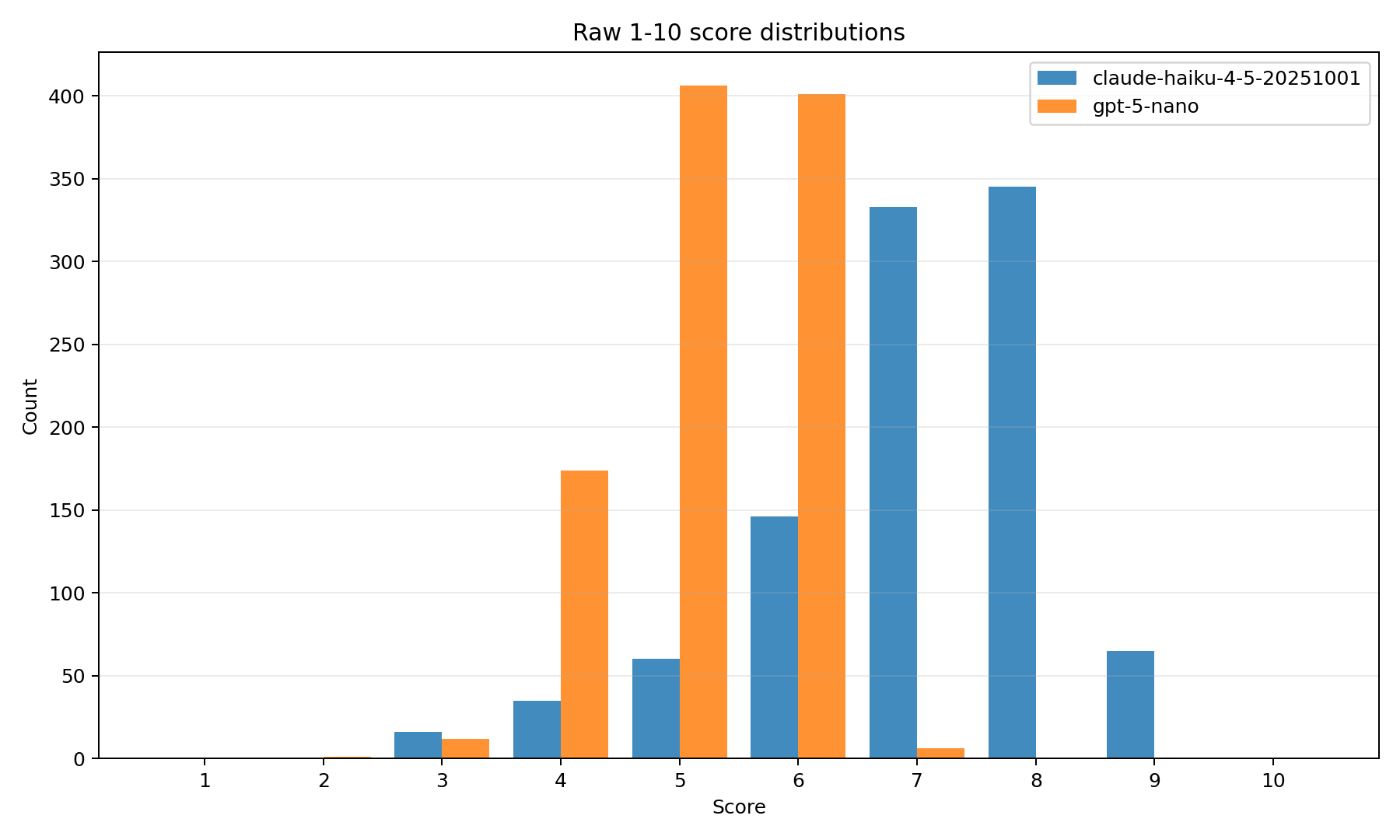

| model | mean | stdev | min | max |
|---|---|---|---|---|
| claude-haiku-4-5-20251001 | 7.040 | 1.250 | 3.0 | 9.0 |
| gpt-5-nano | 5.212 | 0.782 | 2.0 | 7.0 |

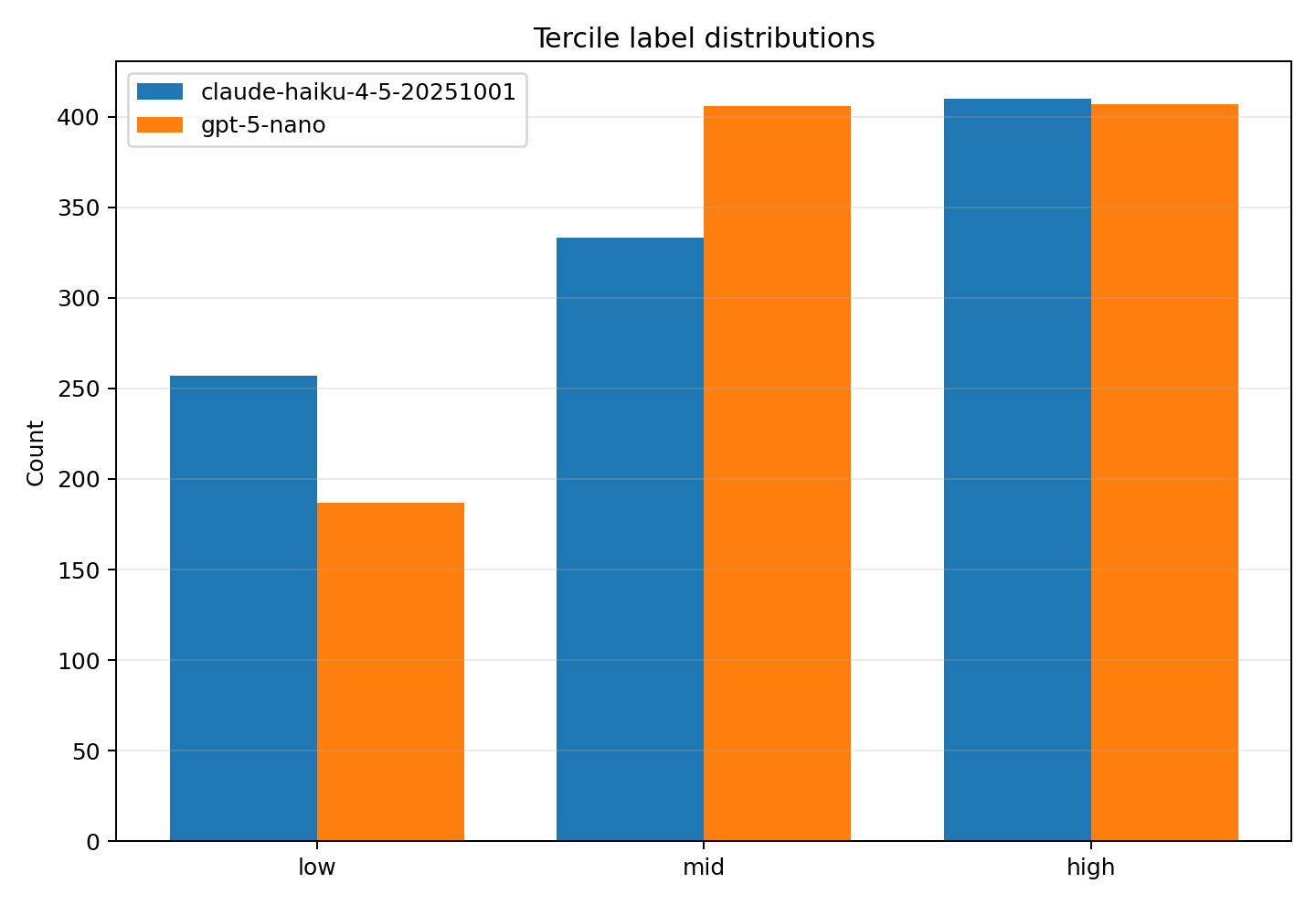

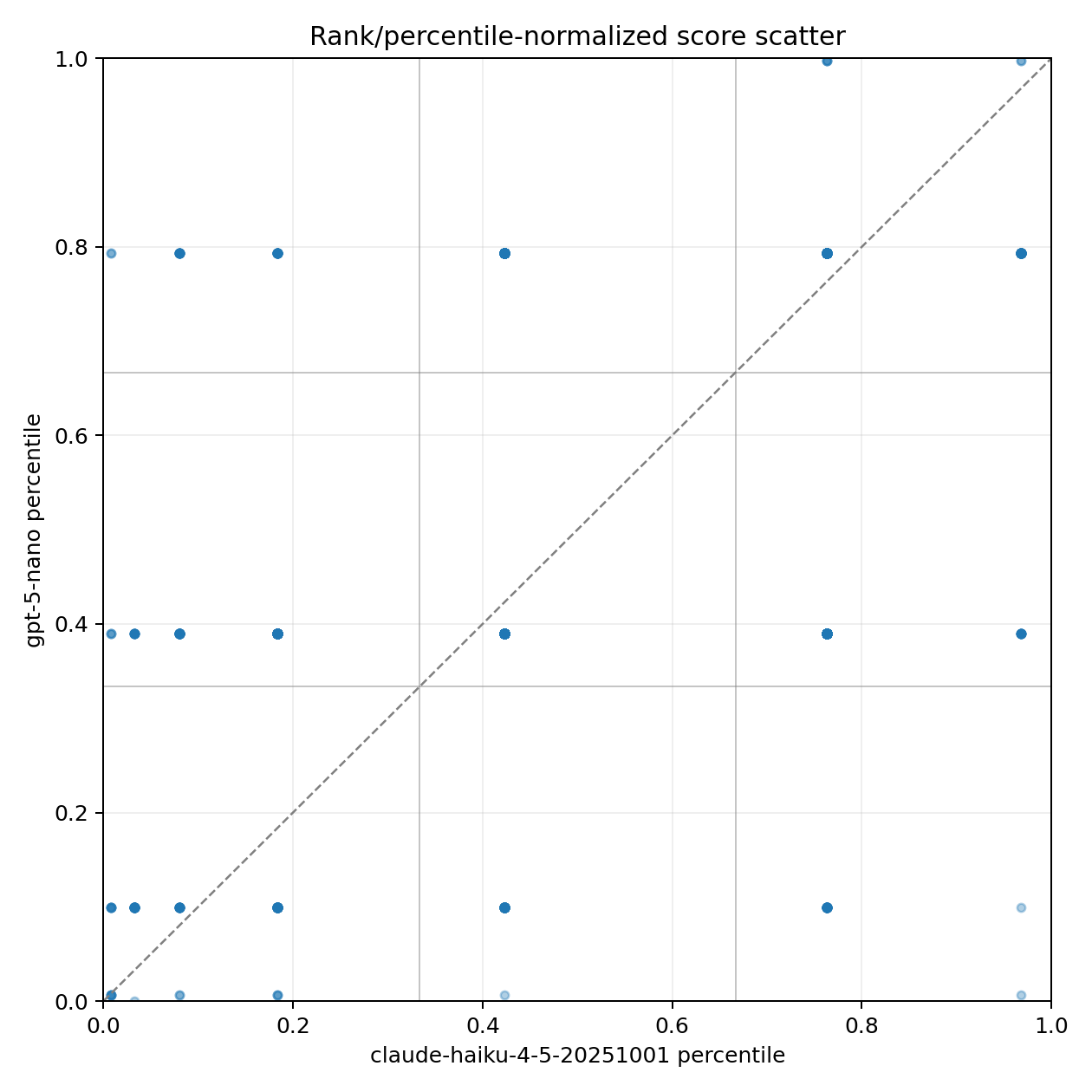

Percentile normalization replaces each judge's raw scores with their rank within that judge's own score distribution, scaled from 0 to 1. This removes scale bias, such as one model scoring more harshly overall.

Tercile labels are assigned from each model's percentile scores: bottom third = low, middle third = mid, top third = high.
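A minimal sketch of the normalization and labelling steps is shown below. The report does not say how ties or boundary values are handled, so this sketch makes two assumptions: tied scores share their average rank, and the cut points 1/3 and 2/3 belong to the higher bucket. The function names and sample scores are hypothetical.

```python
def percentile_ranks(scores):
    """Map one judge's scores to [0, 1] by rank within that judge's own scores.
    Tied scores share their average rank (an assumption)."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i])
    pct = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2  # 0-based average rank of the tied group
        for k in range(i, j + 1):
            pct[order[k]] = avg_rank / (n - 1) if n > 1 else 0.5
        i = j + 1
    return pct


def tercile_label(p):
    """Bucket a percentile into low / mid / high (boundary handling is an assumption)."""
    if p < 1 / 3:
        return "low"
    if p < 2 / 3:
        return "mid"
    return "high"


# Hypothetical scores: the second judge is uniformly two points harsher.
lenient = [4, 5, 6, 7, 8, 9]
harsh = [s - 2 for s in lenient]

# Both judges get identical percentiles, so the constant offset disappears.
print(percentile_ranks(lenient) == percentile_ranks(harsh))
print([tercile_label(p) for p in percentile_ranks(lenient)])
```

Because the ranks depend only on the ordering within each judge's scores, any monotonic rescaling of one judge's scale leaves the labels unchanged. With heavily tied integer scores, the three buckets will not necessarily hold equal numbers of items.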